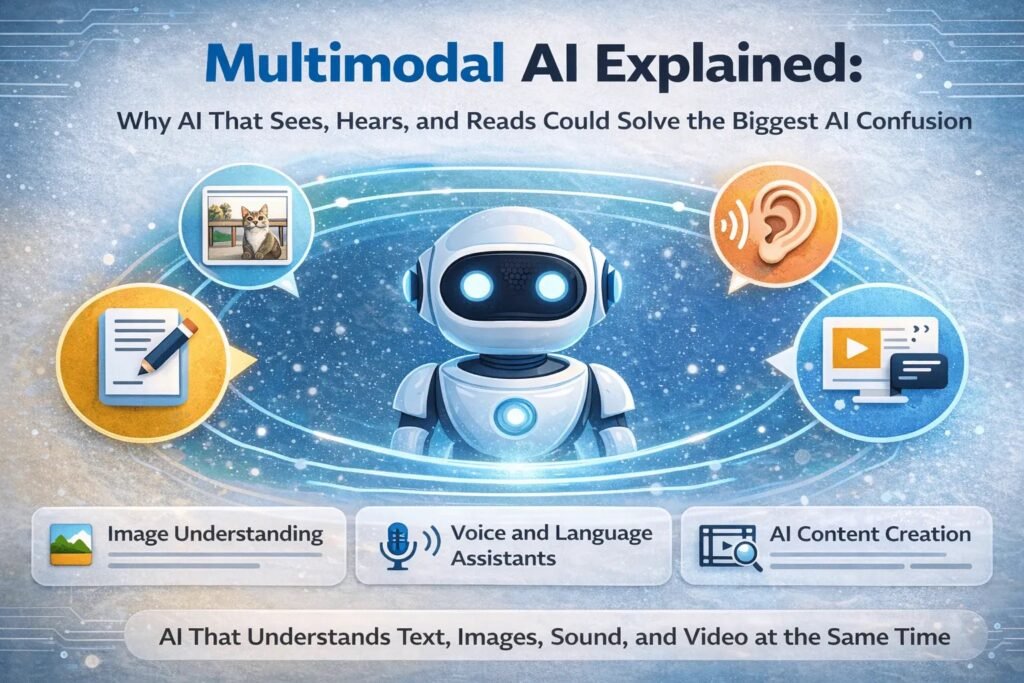

Multimodal AI Explained: Why AI That Sees, Hears, and Reads Could Solve the Biggest AI Confusion

Multimodal AI allows artificial intelligence to understand text, images, audio, and video at the same time. This article explains how it works and why it may solve many common misunderstandings about AI.

Introduction

Many people still imagine artificial intelligence as a system that only reads text or answers questions. But modern AI is quietly evolving into something far more capable.

A new generation of systems called multimodal AI can process images, audio, video, and text together, much as humans combine sight, sound, and language to understand the world.

This shift may help clear up one of the biggest misunderstandings about artificial intelligence: the belief that AI “knows things” the way people do. In reality, traditional AI tools have always been limited by the type of data they can process. Multimodal AI begins to change that.

What Is Multimodal AI?

Multimodal AI refers to artificial intelligence systems that can process multiple types of input at the same time.

Instead of working with just one type of data, multimodal models can combine information from several sources.

For example, a multimodal AI system might:

– Read a written question

– Look at an image

– Listen to an audio clip

– Analyze a video

Then it combines these signals to generate a response.

Humans naturally process information this way. When we talk to someone, we don’t rely only on words. We also read facial expressions, tone of voice, and visual context.

Multimodal artificial intelligence tries to imitate this broader understanding.

Why Traditional AI Often Feels Limited

To understand why multimodal AI matters, it helps to look at how most earlier AI systems worked.

Traditional AI models were usually trained on one type of data only.

Examples include:

– Text-based models that only read and write language

– Image-recognition systems trained only on pictures

– Speech models designed only for audio

Each system operated in its own narrow world.

This separation created a strange limitation: AI might recognize an object in a photo, but it could not easily explain the meaning of that image in context.

For example, a model could identify a cat in an image, but it might struggle to answer questions like:

“Why is the cat hiding under the table?”

Understanding that situation requires combining visual clues, common sense, and language.

Multimodal AI attempts to bridge these gaps.

How Multimodal AI Actually Works

Behind the scenes, multimodal artificial intelligence relies on models trained on several data formats together, often using paired examples such as images with their captions.

These systems learn relationships between different types of information.

For instance:

– Words that describe objects

– Images that represent those objects

– Sounds connected to certain actions

– Videos that show sequences of events

By linking these signals together, the AI builds a broader representation of the world.

Instead of treating text, images, and sound as separate problems, the system learns how they interact.
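
One common way to picture this linking is a shared embedding space: text and images are each turned into lists of numbers, and related items end up close together. The sketch below is a toy illustration in Python, not a description of how any particular model is built. The vectors, the file names, and the `best_caption` helper are all invented for this example; real systems learn much larger vectors from training data.

```python
import math

# Toy, hand-written 3-number "embeddings". These values are invented
# purely for illustration; real models learn far larger vectors.
TEXT_EMBEDDINGS = {
    "a cat hiding under a table": [0.9, 0.1, 0.2],
    "a bar chart of monthly sales": [0.1, 0.9, 0.1],
    "a dog barking at the door": [0.2, 0.1, 0.9],
}

# Hypothetical image files, placed in the same 3-number space as the text.
IMAGE_EMBEDDINGS = {
    "cat_photo.jpg": [0.8, 0.2, 0.1],
    "sales_chart.png": [0.2, 0.8, 0.2],
}


def cosine_similarity(a, b):
    """Return how closely two vectors point in the same direction (1.0 = identical)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def best_caption(image_name):
    """Pick the text whose vector lies closest to the image's vector."""
    image_vec = IMAGE_EMBEDDINGS[image_name]
    return max(
        TEXT_EMBEDDINGS,
        key=lambda caption: cosine_similarity(image_vec, TEXT_EMBEDDINGS[caption]),
    )


for name in IMAGE_EMBEDDINGS:
    print(name, "->", best_caption(name))
```

Because both kinds of data live in the same space, matching an image to a description becomes a simple distance comparison. That is the sense in which the system "learns how they interact."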

This is why modern AI models can do things that once seemed impossible, such as:

– Describing a photograph in natural language

– Answering questions about a chart or diagram

– Understanding both spoken instructions and written prompts

– Generating images based on text descriptions

Real-World Examples of Multimodal AI

Multimodal technology is already appearing in tools people use every day.

Some examples include:

Image Understanding

AI systems can now analyze photos and explain what they show.

This capability is useful in fields such as:

– medical imaging

– accessibility tools for visually impaired users

– document analysis

Voice and Language Assistants

Modern assistants increasingly combine speech recognition with language understanding and contextual reasoning.

This allows them to interpret commands more naturally.

AI Content Creation

Some AI tools can generate images from text prompts or create captions for videos automatically.

These systems rely on multimodal training that connects visual and linguistic data.

Why Multimodal AI Matters for Everyday Users

For many people, AI still feels confusing or unpredictable. One reason is that older systems lacked context.

Multimodal AI helps reduce this problem.

When artificial intelligence can analyze multiple signals at once, its responses often become more relevant and practical.

Consider a simple scenario.

A user uploads a screenshot of a software error and asks:

“What does this mean?”

A text-only AI model cannot read the screenshot at all, so the user would have to retype the error message by hand. A multimodal system can read the image directly, recognize the error text, and explain it.

This makes AI tools feel less like abstract technology and more like practical assistants.
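
The screenshot scenario can be sketched in a few lines of Python. This is a hypothetical structure, not any real product's API: the `Part` and `Model` classes and the file name `error_screenshot.png` are assumptions made up for illustration. The point is simply that one message can carry several kinds of input, and a model can only use the kinds it accepts.

```python
from dataclasses import dataclass, field


@dataclass
class Part:
    """One piece of a user message (hypothetical structure)."""
    modality: str  # for example "text" or "image"
    content: str   # the text itself, or a file name standing in for image data


@dataclass
class Model:
    """A stand-in for an AI model, described only by the input types it accepts."""
    name: str
    accepted: set = field(default_factory=set)

    def unsupported_parts(self, message):
        """Return the parts of the message this model cannot read."""
        return [part for part in message if part.modality not in self.accepted]


# The user's message: a screenshot plus a written question.
message = [
    Part("image", "error_screenshot.png"),
    Part("text", "What does this mean?"),
]

text_only = Model("text-only", {"text"})
multimodal = Model("multimodal", {"text", "image"})

for model in (text_only, multimodal):
    skipped = model.unsupported_parts(message)
    print(model.name, "cannot read:", [part.content for part in skipped])
```

In this sketch the text-only model is left with just the question "What does this mean?" and nothing to apply it to, while the multimodal model receives both parts.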

Does Multimodal AI Make Artificial Intelligence Smarter?

This is where the conversation becomes more nuanced.

Multimodal AI does not suddenly give machines human intelligence. What it does is expand the range of information AI can process.

Think of it less as intelligence and more as sensory expansion.

A text-only AI model is like a person who knows the world only through books, without ever seeing or hearing it firsthand.

A multimodal model, by contrast, gains additional “senses.” It can observe images, interpret speech, and connect those signals with language.

This broader perspective allows the system to respond in more useful ways.

Common Misunderstandings About Multimodal AI

“AI understands everything now.”

Not quite. Even multimodal systems still rely on patterns in training data.

They do not possess true understanding or consciousness.

“Multimodal AI will replace most jobs.”

History suggests technology usually changes jobs rather than eliminating them entirely.

Multimodal AI may automate certain tasks, but it also creates new roles related to AI supervision, design, and integration.

“Only tech experts can use AI tools.”

One of the surprising trends in AI development is how quickly tools are becoming accessible to non-experts.

Multimodal interfaces that combine images, voice, and text may actually make AI easier for beginners.

The Future of Multimodal Artificial Intelligence

Looking ahead, multimodal AI will likely become the default approach for many AI systems.

Future models may combine even more forms of information, including:

– real-time video

– environmental sensors

– location data

– interactive feedback

This could enable AI systems that assist with education, healthcare, research, and creative work in more intuitive ways.

But progress will also raise important questions about privacy, data quality, and responsible AI development.

These challenges will shape how the technology evolves.

Final Thoughts

Multimodal AI represents an important step toward making artificial intelligence more useful and understandable.

Instead of focusing on a single type of data, these systems bring together language, images, sound, and video to build a broader view of the world.

That shift may help solve one of the biggest frustrations people have with AI: the feeling that machines respond without real context.

Multimodal systems are not perfect, and they are far from human intelligence. But they move AI closer to how people actually experience information: through multiple senses working together.

For anyone trying to understand where artificial intelligence is heading, multimodal AI is one development worth paying attention to.